The U.S. Department of the Treasury and financial industry regulators have issued coordinated guidance formalizing data provenance, model lineage, and auditability requirements for AI systems deployed in banking. The guidance arrives as major institutions scale autonomous AI workflow agents across core operations.

Background

On February 19, 2026, the U.S. Department of the Treasury released two foundational resources: a shared Artificial Intelligence Lexicon and the Financial Services AI Risk Management Framework (FS AI RMF). They are the first two of six planned deliverables from the AI Executive Oversight Group (AIEOG), a public-private partnership established by Treasury in collaboration with the Financial and Banking Information Infrastructure Committee (FBIIC) and the Financial Services Sector Coordinating Council (FSSCC).

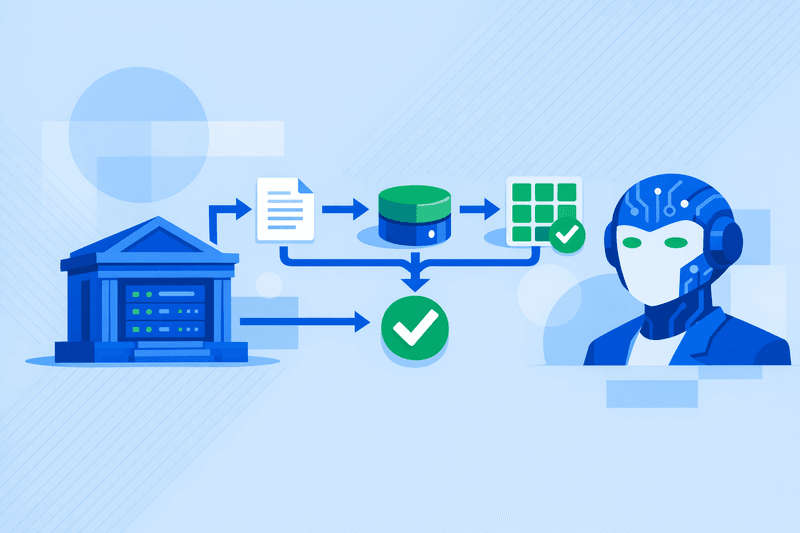

The release coincided with rapid agentic AI deployment across the financial sector. Major global banks, including BNY, Citi, and JPMorgan, have integrated AI agents into critical workflows. BNY has reportedly issued login credentials to more than 100 AI agents operating under human supervision, Citi uses similar systems for operational tasks, and JPMorgan deploys AI to process and analyze thousands of legal documents.

Despite this acceleration, a significant governance gap persists. No dedicated compliance framework currently governs liability or accountability when these agents produce errors. A Capgemini Research Institute survey of 1,100 executives across 14 markets found that 96% of financial services leaders view regulatory and compliance uncertainty as the primary barrier to scaling AI agents.

Details

The FS AI RMF introduces 230 control objectives mapped to varying stages of AI adoption, spanning governance, data practices, model development, validation, monitoring, third-party risk, and consumer protection. The framework adapts the National Institute of Standards and Technology's (NIST) AI Risk Management Framework to the regulatory and operational environment of financial institutions. It provides guidance for evaluating AI use cases, managing lifecycle risks, and integrating AI governance into existing enterprise risk programs.

Among the most operationally demanding requirements are those targeting data provenance. Controls address lineage tracking, feature store governance, and training data documentation. Effective implementation requires automated lineage logging, dataset version control, and rights-signal propagation at ingestion, with data sensitivity tagged at intake so that training sets are privacy-aware by design. Additional control objectives mandate documented model development, bias testing, validation independence, drift detection, and explainability thresholds, all of which must be embedded in MLOps pipelines.
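As a rough illustration of how the provenance controls above might be wired into a pipeline, the Python sketch below tags each field's sensitivity at intake, derives a dataset version ID from a SHA-256 hash of the canonicalized records, propagates rights signals from a parent dataset to its derivatives, and appends every step to a lineage log. The sensitive-field list, rights labels, and function names (`ingest`, `derive`, `LineageLog`) are hypothetical; the FS AI RMF states control objectives, not an implementation.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple

# Hypothetical intake rule: field names treated as restricted on ingestion.
SENSITIVE_FIELDS = {"ssn", "account_number", "date_of_birth"}


@dataclass
class DatasetVersion:
    name: str
    version_id: str              # SHA-256 of the canonicalized records
    sensitivity: Dict[str, str]  # field -> "restricted" | "internal"
    rights: List[str]            # usage-rights signals carried from the source
    source: str


@dataclass
class LineageLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, action: str, ds: DatasetVersion,
               parent: Optional[str] = None) -> None:
        # Append-only log entry linking each version to its parent version.
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "dataset": ds.name,
            "version_id": ds.version_id,
            "parent_version": parent,
        })


def content_hash(records: List[dict]) -> str:
    # Sorted keys make the hash independent of dict insertion order.
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def ingest(name: str, records: List[dict], source: str,
           rights: List[str], log: LineageLog) -> DatasetVersion:
    # Sensitivity is assigned at intake, before any training use.
    fields = sorted({key for row in records for key in row})
    sensitivity = {f: "restricted" if f in SENSITIVE_FIELDS else "internal"
                   for f in fields}
    ds = DatasetVersion(name, content_hash(records), sensitivity,
                        list(rights), source)
    log.record("ingest", ds)
    return ds


def derive(parent: DatasetVersion, records: List[dict], name: str,
           log: LineageLog) -> Tuple[DatasetVersion, List[dict]]:
    # Build a training view that drops restricted fields; rights propagate.
    kept = [f for f, level in parent.sensitivity.items() if level != "restricted"]
    new_records = [{f: row[f] for f in kept if f in row} for row in records]
    child = DatasetVersion(name, content_hash(new_records),
                           {f: parent.sensitivity[f] for f in kept},
                           list(parent.rights), parent.source)
    log.record("derive", child, parent=parent.version_id)
    return child, new_records
```

Hashing content rather than assigning sequential version numbers means any silent change to the data produces a new version ID, which is one way an auditor could confirm that a model was trained on exactly the dataset the lineage log names.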

The AI Lexicon formally defines data lineage as "the history of processing of a data element, which may include point-to-point data flows and the data actions performed upon the data element." It also standardizes terms such as "hallucination" and "model lineage," ensuring that IT, compliance, and vendor teams share a common vocabulary.
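The Lexicon's definition can be made concrete with a small data structure: each event records a data element, the upstream elements it came from (the point-to-point flow), the data action performed, and the system where it ran. Walking those events backward reconstructs the element's processing history. The class and field names below are hypothetical illustrations, not terms drawn from the Lexicon or any Treasury specification.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class LineageEvent:
    output: str               # data element produced, e.g. "features.dti"
    inputs: Tuple[str, ...]   # upstream data elements (point-to-point flows)
    action: str               # data action performed, e.g. "copy", "ratio"
    system: str               # system where the action ran


class LineageGraph:
    def __init__(self) -> None:
        self._by_output: Dict[str, List[LineageEvent]] = {}

    def add(self, event: LineageEvent) -> None:
        self._by_output.setdefault(event.output, []).append(event)

    def history(self, element: str) -> List[LineageEvent]:
        """Return every event upstream of `element`, ancestors before descendants."""
        ordered: List[LineageEvent] = []
        seen: Set[LineageEvent] = set()

        def visit(name: str) -> None:
            for event in self._by_output.get(name, []):
                if event in seen:  # guards against shared parents and cycles
                    continue
                seen.add(event)
                for parent in event.inputs:
                    visit(parent)
                ordered.append(event)  # post-order: parents are appended first

        visit(element)
        return ordered


# Usage: trace a hypothetical debt-to-income feature back to its sources.
g = LineageGraph()
g.add(LineageEvent("staging.income", ("core.income",), "copy", "etl-job"))
g.add(LineageEvent("staging.debt", ("core.debt",), "copy", "etl-job"))
g.add(LineageEvent("features.dti", ("staging.income", "staging.debt"), "ratio", "feature-store"))
for e in g.history("features.dti"):
    print(e.system, e.action, e.inputs, "->", e.output)
```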

Separately, FINRA's 2026 Annual Regulatory Oversight Report introduced dedicated guidance on generative AI agents. FINRA identified data provenance as a specific cybersecurity concern, noting that firms' technology tools, data provenance practices, and processes must be able to identify how threat actors use AI or GenAI against the firm or its customers. The report also flags that complex, multi-step agent reasoning makes outcomes difficult to trace or explain, complicating auditability, and that agents operating on sensitive data may unintentionally store, expose, disclose, or misuse sensitive or proprietary information.

FINRA's report clarifies that its rules "and the securities laws more generally, continue to apply when firms use GenAI or similar technologies in the course of their businesses." Existing rules on supervision, communications, recordkeeping, and fair dealing apply to broker-dealer AI deployments.

Treasury stated that the FS AI RMF is scalable, allowing both community banks and multinational institutions to tailor implementation to their size and complexity. However, legal analysts caution that its practical effect may exceed its advisory framing: once examiners and internal auditors have a 230-point checklist, it becomes the de facto benchmark regardless of its non-binding status.

For AI vendors supplying workflow automation and model infrastructure to banks, the compliance burden is direct. When institutions negotiate AI procurement or renewal agreements in 2026, the Lexicon and FS AI RMF provide a structured basis for the coherent, comprehensive approach regulators increasingly expect: visibility into vendor model and data practices, audit and testing rights, update and change controls, incident response coordination, and a clear allocation of responsibilities.

Outlook

The AI Lexicon and FS AI RMF are part of a coordinated series of AIEOG deliverables addressing identity, fraud, explainability, and data practices, with four additional resources still forthcoming. These developments signal that AI governance is now formally on the agenda of U.S. financial authorities, regulators, and private industry groups. Though styled as non-binding, the guidance may drive increased scrutiny and establish standards against which financial services firms are evaluated. State-level regulators are also moving independently: New York's Department of Financial Services has imposed strict model governance requirements for insurers and banks using AI, mandating bias testing, documentation, and executive accountability.