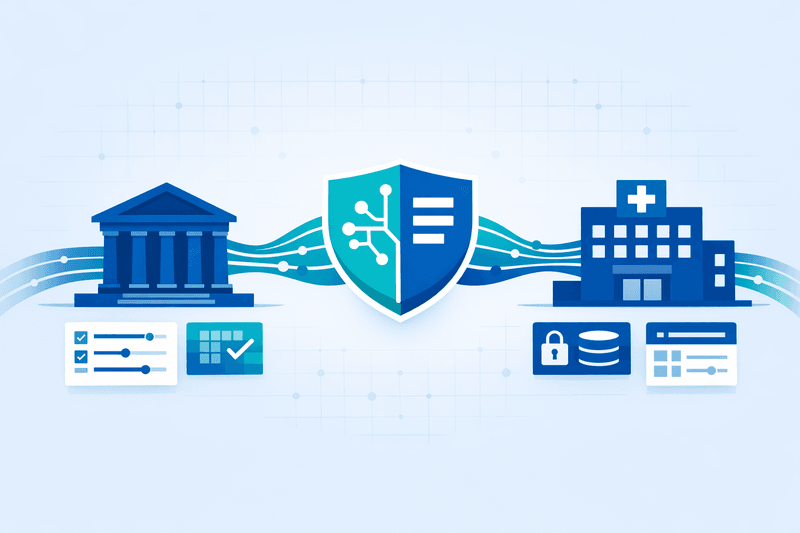

Regulators in the U.S. and Europe are moving toward standardized AI data provenance requirements in banking and healthcare, prompting enterprise software vendors to accelerate delivery of data clean room capabilities and governance controls designed to meet audit and compliance demands in two of the most scrutinized industries.

Background

The regulatory pressure stems from a compounding set of frameworks that have activated in quick succession. As of August 2025, obligations for general-purpose AI (GPAI) models under the EU AI Act have taken effect, requiring foundation model providers to publish detailed summaries of training data and compelling downstream users to verify that deployed systems do not fall into prohibited categories. In the United States, federal banking regulators have adopted a parallel posture. The OCC, Federal Reserve, and CFPB have consistently emphasized that explainability and transparency are compliance requirements, particularly when AI systems influence credit decisions subject to fair lending laws, according to analysis by Wolters Kluwer. A Q1 2026 Wolters Kluwer Banking Compliance AI Trend Report found that explainability and transparency (28.4%) and bias and discrimination were the most acute regulatory concerns cited by financial institutions.

Healthcare faces its own wave of sector-specific mandates. In September 2025, the Joint Commission, an accrediting body covering over 23,000 healthcare organizations, partnered with the Coalition for Health AI (CHAI), which represents nearly 3,000 member organizations, to release the first comprehensive guidance for responsible AI adoption across U.S. health systems. The FDA has also acted, issuing draft guidance in 2025 for AI-enabled devices focused on documentation, transparency, bias prevention, and post-market monitoring. Regulators increasingly frame AI provenance - the documented trail of training data origin, labeling, validation, and updates - as a first-order compliance obligation rather than a technical best practice.

Details

The push for unified provenance standards has exposed a structural gap in how AI data is currently tracked. When an AI system processes protected health information to generate a prior authorization request or recommend a treatment protocol, every step of that process must be traceable, explainable, and auditable - including the data that informed the decision, the model version that generated the output, and the human oversight that validated the result, according to reporting on healthcare AI governance.
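To make the three traceable elements concrete, the following is a minimal Python sketch of what a per-decision audit record could look like. It is illustrative only: the `ProvenanceRecord` class, its field names, and the SHA-256 fingerprint are assumptions made for this example and are not drawn from any regulation, guidance document, or vendor product named in this article.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional, Tuple
import hashlib
import json


@dataclass(frozen=True)
class ProvenanceRecord:
    """One AI-assisted decision (hypothetical schema, for illustration only)."""
    decision_id: str
    input_data_refs: Tuple[str, ...]   # pointers to the data that informed the decision
    model_version: str                 # model version that generated the output
    output_summary: str
    reviewed_by: Optional[str] = None  # human reviewer who validated the result
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def missing_elements(self) -> list:
        """Name which of the three traceable elements are absent."""
        missing = []
        if not self.input_data_refs:
            missing.append("input_data")
        if not self.model_version:
            missing.append("model_version")
        if not self.reviewed_by:
            missing.append("human_oversight")
        return missing

    def fingerprint(self) -> str:
        """SHA-256 over the serialized record, so later edits are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Example: a prior authorization draft that no human has reviewed yet.
record = ProvenanceRecord(
    decision_id="example-001",
    input_data_refs=("claims-extract-A", "clinical-notes-B"),
    model_version="model-v1",
    output_summary="prior authorization request drafted",
)
print(record.missing_elements())  # ['human_oversight']
```

A record like this would flag the decision as incomplete until a reviewer is attached, and the fingerprint gives an auditor a way to check that the stored record has not been altered after the fact.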

State-level activity compounds federal pressure. As of early 2026, 43 states have introduced over 240 AI bills, and the Texas Responsible Artificial Intelligence Governance Act (TRAIGA), effective January 1, 2026, requires disclosures when government agencies and healthcare providers use AI systems that interact with consumers. The American Hospital Association has warned regulators that the existing patchwork of state and federal health information privacy requirements remains a significant barrier to data sharing and can inhibit the development and deployment of AI tools, given that data drives algorithm validity.

Vendors are responding by embedding provenance and governance controls into data clean room architectures. Databricks Clean Rooms, now generally available on AWS and Azure, include HIPAA compliance features for healthcare organizations processing sensitive patient data, federated sharing across clouds, and management APIs for automation. Decentriq, which uses confidential computing and encryption technologies, is positioned specifically for highly regulated sectors including banking and healthcare, enabling secure data analytics, risk assessment, and collaboration without exposing raw information, according to Gartner Peer Insights. Snowflake enables clean room participants to define specific rules about which operations can be performed on their data, who can run them, and what the responses can be, with regulatory compliance responsibilities falling on participants.
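The rule-based model described for clean rooms (which operations are allowed, who may run them, and what a response may contain) can be sketched generically in Python. This is not Snowflake's, Databricks', or Decentriq's API. The `CleanRoomPolicy` class, the `run_query` function, and the minimum-group-size rule used here to restrict responses are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class CleanRoomPolicy:
    """Rules a data owner attaches to its data (hypothetical, for illustration)."""
    allowed_operations: FrozenSet[str]  # which operations may be performed
    allowed_callers: FrozenSet[str]     # who may run them
    min_group_size: int                 # assumed response rule: suppress small groups


def run_query(
    policy: CleanRoomPolicy,
    caller: str,
    operation: str,
    grouped_counts: Dict[str, int],
) -> Dict[str, int]:
    """Return aggregate counts only if the caller and operation are permitted."""
    if caller not in policy.allowed_callers:
        raise PermissionError(f"caller {caller!r} is not permitted")
    if operation not in policy.allowed_operations:
        raise PermissionError(f"operation {operation!r} is not permitted")
    # Drop any group smaller than the threshold so that rare combinations
    # cannot be used to single out individuals.
    return {
        group: count
        for group, count in grouped_counts.items()
        if count >= policy.min_group_size
    }


# Example: a partner may run counts, but small groups are suppressed.
policy = CleanRoomPolicy(
    allowed_operations=frozenset({"count"}),
    allowed_callers=frozenset({"partner_bank"}),
    min_group_size=10,
)
result = run_query(policy, "partner_bank", "count", {"segment_a": 42, "segment_b": 3})
print(result)  # {'segment_a': 42}
```

In this sketch the policy travels with the data owner rather than the querying party, which mirrors the article's point that each participant defines the rules for its own data while remaining responsible for its own regulatory compliance.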

On the banking side, NIST released a preliminary draft of an AI Cybersecurity Risk Profile in December 2025 that harmonizes more than 2,500 regulatory expectations from agencies including the Federal Reserve, OCC, and FDIC into a concise set of diagnostic statements - a development American Banker described as offering banks a new roadmap for integrating AI into existing cybersecurity strategies. The profile allows a bank to assess its compliance posture once and report to multiple regulators simultaneously.

Outlook

Whether federal regulators will converge on a single provenance standard remains uncertain, particularly given the U.S. administration's deregulatory posture. On December 11, 2025, President Trump signed an executive order titled "Ensuring a National Policy Framework for Artificial Intelligence," aimed at preempting state AI laws and establishing a single national framework. However, state enforcement has continued unabated - Massachusetts announced a $2.5 million settlement tied to AI-model-related disparate impact outcomes in banking - and the EU AI Act's extraterritorial reach means multinational banks and health systems must comply with it regardless of U.S. federal policy. Enterprise procurement decisions are consequently shifting toward vendors that can demonstrate audit-ready lineage, clean room isolation, and cross-jurisdictional governance controls as baseline capabilities.