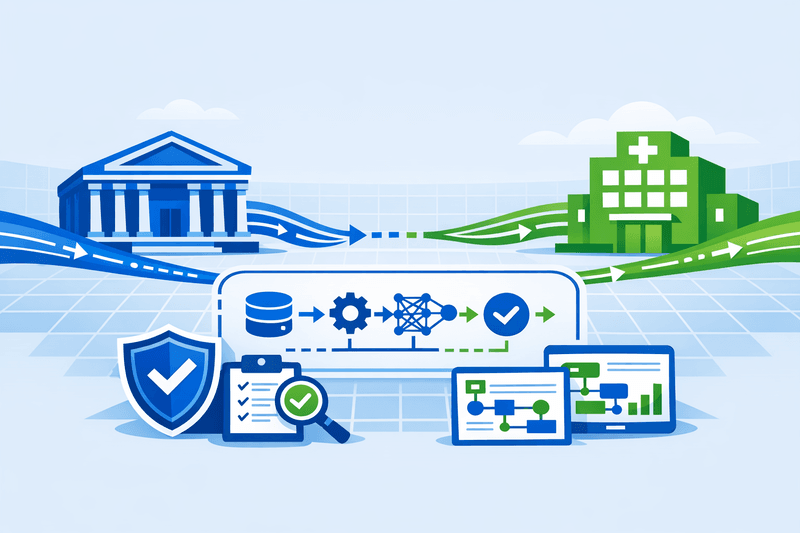

Most AI governance conversations focus on the model. The data that feeds it - where it came from, how it was transformed, who accessed it - has remained largely undocumented in regulated sectors. A new cross-sector regulatory proposal aims to change that.

U.S. financial regulators and health oversight bodies have circulated a proposed framework to standardize AI data provenance across banks and health systems. The proposal arrives at an inflection point: on April 17, 2026, the Federal Reserve, FDIC, and OCC replaced long-standing model risk rules with a more risk-based, principles-driven framework for model risk management. Yet that revised guidance explicitly set aside generative and agentic AI. The 2026 MRM Guidance states that "generative AI and agentic AI models are novel and rapidly evolving" and are "not within the scope of this guidance," and the agencies committed to releasing a separate request for information on AI use in banking "in the near future."

The provenance proposal, now open for public comment, is intended to fill that gap - establishing consistent standards for tracking the origins, transformations, and usage of data powering AI models in credit risk scoring, fraud detection, clinical decision support, and patient data analytics.

What the Proposal Requires

The framework centers on four interconnected requirements. Together, they represent the most comprehensive federal attempt to make AI decision-making auditable from the data layer up.

{{component:data_table_placeholder}}

1. Harmonized Data Lineage Schema. Organizations would be required to catalog data origin, data quality metrics, and each transformation step applied to data used in AI model inputs. Strong AI data management involves versioning training datasets, labeling data with appropriate metadata, and maintaining logs of data access and transformations in AI workflows. The proposal converts this from aspiration to obligation.

2. Governance Controls. The framework mandates role-based access controls, encryption, anomaly detection, and mechanisms to verify data source trustworthiness before data enters a model pipeline. Organizations must ensure responsible management of patient data used in AI systems, with particular attention to data provenance, quality, and security.

3. Model Risk Management Integration. Provenance records would need to connect directly to model validation processes, retraining triggers, and impact assessments. Development, validation, deployment, monitoring, and retirement are treated as one governed chain, with supervisors expecting lineage across every link - not snapshots at hand-off points. The shared expectation: evidence must be produced as a byproduct of how models are built, not reconstructed after the fact.

4. Incident Response Obligations. Institutions would face escalation requirements for suspected data integrity issues or model performance degradation, with defined timelines for notifying regulators and internal risk management teams. Traceability, provenance, and explainability become compliance requirements - organizations must demonstrate how AI outputs are verified, monitored, and controlled.
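To make the lineage and "evidence as a byproduct" ideas concrete, here is a minimal Python sketch of what a lineage record might look like. It is an illustration only: the proposal as described here does not prescribe a schema, and the `LineageRecord` class, its field names, and the hash-chaining approach are all assumptions chosen for the example. Each transformation step is logged at the moment it runs and linked to the previous step by a SHA-256 hash, so a later alteration to the log can be detected.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _digest(payload: dict) -> str:
    """Stable SHA-256 over a JSON-serializable payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass
class LineageRecord:
    """Hypothetical lineage entry for one dataset feeding a model."""

    dataset_id: str
    origin: str  # where the data came from
    quality_metrics: dict[str, float]
    steps: list[dict] = field(default_factory=list)

    def _root_hash(self) -> str:
        # Anchor of the chain: ties the log to this dataset and its origin.
        return _digest({"dataset_id": self.dataset_id, "origin": self.origin})

    def log_step(self, name: str, actor: str, params: dict) -> dict:
        """Append a transformation step, chained to the previous step's hash."""
        prev_hash = self.steps[-1]["hash"] if self.steps else self._root_hash()
        body = {
            "name": name,
            "actor": actor,
            "params": params,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        step = {**body, "hash": _digest(body)}
        self.steps.append(step)
        return step

    def verify(self) -> bool:
        """Recompute the chain; False means a step was altered or reordered."""
        expected_prev = self._root_hash()
        for step in self.steps:
            body = {k: v for k, v in step.items() if k != "hash"}
            if step["prev_hash"] != expected_prev or _digest(body) != step["hash"]:
                return False
            expected_prev = step["hash"]
        return True
```

In use, a pipeline would call `log_step` from inside each transformation (for example, `record.log_step("drop_pii", "etl-service", {"columns": ["ssn"]})`) and a validator or auditor would call `verify()` before relying on the record. A failed check is the kind of suspected data integrity issue that would feed the escalation process described in item 4.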

The Banking Regulatory Context

The provenance proposal does not emerge in a vacuum. On April 17, 2026, the Federal Reserve, FDIC, and OCC replaced SR 11-7 and related BSA/AML issuances with a more explicitly risk-based, principles-driven framework for model risk management. That revision, now issued as SR 26-2, represents the first overhaul of bank model risk rules in 15 years.

IBM's Global Banking Outlook reports that 78% of banks are now tactically adopting generative AI, and the global AI-in-banking market is estimated at $45.59 billion in 2026. Even so, regulators chose to modernize rules for traditional models while declining to write rules for the tools banks are racing to deploy.

The result is a regulatory gap the provenance framework is designed to partially bridge. Banks deploying agentic AI for fraud detection, customer service orchestration, or trading support currently have no specific supervisory guidance - they must extrapolate principles from a framework that explicitly excludes their use case.

The OCC, Federal Reserve Board, and FDIC plan to issue a request for information addressing model risk management generally and considering, in particular, banks' use of AI, including generative AI, agentic AI, and AI-based models. The provenance standards proposal aligns with - and anticipates - that forthcoming RFI.

The Healthcare Regulatory Context

Healthcare faces a parallel governance challenge. 46% of U.S. healthcare organizations are currently implementing generative AI technologies, according to industry surveys. Yet the vast majority of medical AI is never reviewed by a federal regulator, creating significant liability exposure for organizations deploying AI systems without proper oversight frameworks.

In 2025, the FDA issued draft guidance for AI-enabled devices focused on documentation, transparency, bias prevention, and post-market monitoring. Rather than a one-time approval, the FDA's "total product lifecycle" approach recognizes that algorithms change and require continuous oversight.

In September 2025, the Joint Commission partnered with the Coalition for Health AI (CHAI) to release the first comprehensive guidance for responsible AI adoption across U.S. health systems - a landmark collaboration between the accrediting body for over 23,000 healthcare organizations and a coalition representing nearly 3,000 member organizations.

Regulators want to trace the origin of training data - to know how it was labeled, validated, and updated, and to ensure it represents the full range of patients it is meant to serve. The unified provenance proposal formalizes this expectation.


Industry Reaction: Support With Caveats

Stakeholder responses reflect the complexity of cross-sector rulemaking.

Banks welcome the clarity on lineage documentation but flag the risk of duplicative compliance efforts across multiple agencies. With SR 26-2 now in place and an AI-specific RFI pending, institutions face overlapping regulatory timelines. The banking industry's response to the revised model risk rules has been split: model risk teams managing traditional statistical models have largely welcomed the update, while teams deploying generative or agentic AI remain without specific supervisory guidance. The provenance overlay adds another layer of obligations on top.

Health systems argue for phased implementation timelines, citing the sensitivity of patient data and the criticality of clinical workflows. Any disruption to clinical decision support systems carries patient safety implications that demand careful change management.

Privacy advocates push for explicit consent frameworks and robust data minimization requirements alongside provenance controls. Organizations must establish policies for sourcing data legally, ensuring consent where applicable, documenting data provenance, and verifying the accuracy, completeness, and diversity of AI training data to prevent biased outcomes.

Regulators have framed the proposal as a floor for auditability - not a ceiling on innovation. The broader federal approach recommends AI regulatory sandboxes and reliance on existing federal agencies rather than creation of a new standalone AI regulator.

What Organizations Should Do Now

Compliance timelines remain subject to the rulemaking process, but preparatory work can begin immediately. Organizations that build provenance infrastructure now will be better positioned to absorb formal requirements - and to demonstrate good-faith compliance readiness during supervisory review.

{{component:steps_placeholder}}

Use the readiness assessment below to evaluate where the organization stands across the five core compliance domains:

{{widget:readiness_widget_placeholder}}

The Broader Compliance Horizon

Stakeholders should anticipate continued incremental federal action alongside sustained state-level activity, reinforcing a hybrid regulatory environment. In healthcare in particular, AI policy is likely to continue evolving through agency action and congressional oversight. This dynamic underscores the importance of a dual-track engagement strategy - shaping emerging federal standards while remaining responsive to state-level developments.

For enterprise software and data platform vendors, the implications extend into product design. Vendor-supplied AI features now carry regulatory implications: model lifecycle management, drift monitoring, and bias detection all need to be handled as documented, repeatable processes. Contracts governing data-sharing arrangements and cross-institutional AI deployments will increasingly need provenance provisions embedded as standard terms.

Establishing AI governance and compliance programs now will mitigate risk and help organizations maximize their investment in AI. For banking and healthcare leaders, the data provenance proposal sends a clear signal: the era of AI operating above the audit trail is ending.

Frequently Asked Questions

What is AI data provenance? AI data provenance refers to the documented record of a dataset's origins, transformations, quality assessments, and usage within AI model pipelines. It enables organizations to trace any model output back to its source data and the transformations applied along the way.
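As a rough illustration of that trace-back, the Python sketch below walks a small lineage graph from a model output to its raw sources. The artifact names and the `DERIVED_FROM` mapping are hypothetical; a real system would read these edges from a metadata catalog rather than a hard-coded dictionary.

```python
from __future__ import annotations

from collections import deque

# Hypothetical lineage edges: each artifact maps to the artifacts it was derived from.
DERIVED_FROM = {
    "score:applicant-123": ["model:credit-v4", "features:2026-03"],
    "model:credit-v4": ["features:2026-01"],
    "features:2026-03": ["raw:bureau-feed", "raw:core-banking"],
    "features:2026-01": ["raw:bureau-feed"],
}


def trace_to_sources(artifact: str, edges: dict[str, list[str]]) -> list[str]:
    """Return the upstream artifacts that have no recorded parents, sorted."""
    seen = {artifact}
    queue = deque([artifact])
    sources = set()
    while queue:
        node = queue.popleft()
        parents = edges.get(node, [])
        # A node with no parents (other than the starting point) is a raw source.
        if not parents and node != artifact:
            sources.add(node)
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(sources)


print(trace_to_sources("score:applicant-123", DERIVED_FROM))
# prints ['raw:bureau-feed', 'raw:core-banking']
```

The breadth-first walk visits each artifact once, so shared ancestors such as the bureau feed are reported a single time even when several intermediate datasets depend on them.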

How does the banking provenance proposal relate to SR 26-2? SR 26-2 (the April 2026 revised MRM guidance) establishes principles-based model risk management for traditional bank models but explicitly excludes generative and agentic AI. The data provenance framework addresses that gap by establishing data-layer standards that apply across AI model types, ahead of the agencies' forthcoming AI-specific RFI.

What healthcare regulations already govern AI data quality? Existing frameworks include HIPAA/HITECH (for patient data protection), FDA guidance on AI/ML-enabled Software as a Medical Device (SaMD), and the Joint Commission/CHAI guidance on responsible AI adoption released in September 2025. The provenance proposal would establish additional, harmonized requirements specifically for AI data lineage.

Will smaller institutions be held to the same standards? Regulators have signaled that requirements should be proportional to institution size, complexity, and AI use. Community banks have already received explicit flexibility guidance under OCC Bulletin 2025-26. Health systems are similarly pushing for phased timelines. Proportionality is expected to be built into the final rulemaking.

What should procurement leaders do when evaluating AI vendors? Request documented data lineage capabilities from AI vendors, including how training data is cataloged, how transformations are logged, and how the vendor supports customer audit obligations. Embed provenance requirements into vendor contracts and data-sharing agreements before enforcement timelines are formalized.