Enterprises and regulators are converging on training-data governance as a non-negotiable discipline, as AI deployment moves from pilots into regulated, mission-critical operations across healthcare, financial services, and the public sector.

The shift stems from overlapping regulatory mandates that now impose direct obligations on how AI training datasets are sourced, documented, and maintained. Under the EU AI Act, general-purpose AI model obligations became applicable on 2 August 2025, and high-risk AI system requirements are scheduled to apply from August 2026. A Commission-mandated template now requires providers of general-purpose AI models to publish an overview of their training data sources, including large datasets and top domain names, along with data processing details. In the United States, California's AB 2013, effective January 2026, requires covered developers of generative AI systems to publish a high-level summary of their training data, covering sources, data types, whether the data includes intellectual property or personal information, and processing details.
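To make the "top domain names" element concrete, the following Python sketch counts crawled documents per host and returns the most frequent ones. It is an illustration under assumptions, not the Commission template's methodology: the function name `top_domains`, the `top_n` cut-off, and the choice to rank by document count rather than by content volume are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def top_domains(urls: list[str], top_n: int = 3) -> list[tuple[str, int]]:
    """Count crawled documents per host name and return the most frequent ones.

    Hypothetical helper: ranks by document count, not by bytes of content.
    """
    hosts = Counter()
    for url in urls:
        host = urlsplit(url).hostname  # lower-cased, port stripped; None if no host
        if host:
            # Treat "www.example.org" and "example.org" as the same domain.
            hosts[host.removeprefix("www.")] += 1
    return hosts.most_common(top_n)


# Hypothetical crawl log.
crawl = [
    "https://www.example.org/a",
    "https://example.org/b",
    "https://docs.example.com/x",
    "http://news.example.net/1",
    "http://news.example.net/2",
    "http://news.example.net/3",
]
print(top_domains(crawl, top_n=2))
# → [('news.example.net', 3), ('example.org', 2)]
```

A disclosure pipeline weighting by content size would swap the `+= 1` for the byte length of each document; the counting structure stays the same.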

Background

Integrating AI into enterprise workflows has significantly expanded governance requirements. Traditional data governance frameworks, originally designed for analytics and reporting, fall short for AI because they do not address AI-specific challenges such as probabilistic model behavior, training data provenance, model drift, and hallucinations in generative AI outputs.

The EU AI Act entered into force on 1 August 2024 and began phasing in substantive obligations from 2 February 2025. In the U.S., the policy landscape became volatile after Executive Order 14110 was revoked in January 2025, and the resulting policy divergence has created compliance complexity for multinationals. A December 2025 executive order introduced direct tension between federal policy and state data privacy requirements, particularly around transparency and disclosure, with the federal position framing mandatory training data disclosure as potentially revealing trade secrets. State laws, including California's privacy framework and Colorado's AI Act, remain enforceable.

Proactive organizations are already aligning with international standards such as ISO/IEC 42001 and the NIST AI Risk Management Framework to stay ahead of compliance demands.

Details

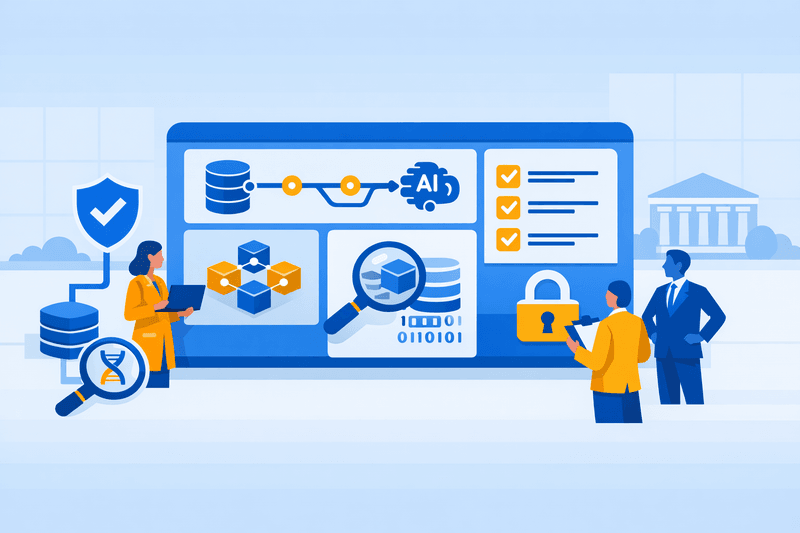

Training data governance now encompasses four core disciplines: provenance and lineage tracking, bias detection and mitigation, lifecycle management, and access control.

On provenance, AI training data must be auditable, licensed, and privacy-compliant, with lineage tracked from raw source to preprocessed input, including metadata on collection method, consent status, source system, and enrichment steps. According to a Gartner report, 60% of large enterprises will have deployed data lineage tools by 2026 to address regulatory and operational risk, up from just 20% in 2023.
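A lineage record of the kind described above can be sketched as a small data structure. This Python example is a minimal sketch assuming a hypothetical `LineageRecord` schema: the field names mirror the metadata listed in the paragraph (collection method, consent status, source system, enrichment steps), while the SHA-256 content hash per step and the `is_audit_ready` gate are illustrative choices, not a standard or any vendor's format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class LineageStep:
    """One transformation applied between the raw source and the training input."""
    name: str            # e.g. "raw_ingest", "pii_redaction" (hypothetical labels)
    output_sha256: str   # content hash of the data after this step


@dataclass
class LineageRecord:
    """Hypothetical provenance record for a single training dataset."""
    dataset_id: str
    source_system: str
    collection_method: str
    consent_status: str
    license: str
    steps: list[LineageStep] = field(default_factory=list)

    def add_step(self, name: str, data: bytes) -> None:
        # Hash the transformed bytes so an auditor can verify which version was used.
        self.steps.append(LineageStep(name, hashlib.sha256(data).hexdigest()))

    def is_audit_ready(self) -> bool:
        # Minimal gate: all provenance fields filled in and at least one step recorded.
        required = [self.source_system, self.collection_method,
                    self.consent_status, self.license]
        return all(required) and len(self.steps) > 0

    def to_json(self) -> str:
        # asdict() also converts the nested LineageStep entries.
        return json.dumps(asdict(self), indent=2)


# Hypothetical usage.
record = LineageRecord(
    dataset_id="claims-2024-q4",
    source_system="claims_db",
    collection_method="batch_export",
    consent_status="opt_in",
    license="internal",
)
raw = b"patient_id,diagnosis\n1,A\n"
record.add_step("raw_ingest", raw)
record.add_step("pii_redaction", b"diagnosis\nA\n")
print(record.is_audit_ready())  # True
```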

Bias mitigation is now explicitly tied to training data governance. If training data contains historical biases based on race, gender, geography, or socioeconomic status, models trained on it will not only replicate those biases but often amplify them. An IBM study found that 68% of business leaders are concerned about bias in AI outputs, yet only 35% have mechanisms in place to actively detect or mitigate it. The EU AI Act stipulates that training, validation, and testing datasets must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete in view of the intended purpose, with appropriate statistical properties.
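One crude starting point for checking whether a dataset is "sufficiently representative" is to compare each group's share in the training data against a reference distribution. The sketch below assumes a hypothetical reference (for example, census shares) and a hypothetical 5-percentage-point tolerance; it is not a test prescribed by the EU AI Act, and it covers only representation, not label or outcome bias.

```python
from collections import Counter


def representation_gaps(
    records: list[dict],
    attribute: str,
    reference_shares: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, float]:
    """Return groups whose share in `records` differs from the reference by more than `tolerance`.

    Positive values mean over-representation; negative values mean under-representation.
    """
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        diff = observed - expected
        if abs(diff) > tolerance:
            gaps[group] = round(diff, 3)
    return gaps


# Hypothetical training sample: 80 urban records, 20 rural records.
sample = [{"region": "urban"}] * 80 + [{"region": "rural"}] * 20
# Hypothetical reference population: 60% urban, 40% rural.
print(representation_gaps(sample, "region", {"urban": 0.6, "rural": 0.4}))
# → {'urban': 0.2, 'rural': -0.2}
```

Groups present in the data but missing from `reference_shares` are ignored, so the reference should enumerate every group of interest.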

Synthetic data has emerged as a primary tool for balancing privacy obligations against training data volume requirements. In 2025, synthetic data moved from experimental to enterprise-ready, driven by stronger privacy rules, commercial tooling, and growing evidence that carefully crafted synthetic data can unlock innovation without exposing real individuals. In healthcare, synthetic patient data enables testing treatment plans at scale without exposing medical records. In financial services, synthetic transaction logs allow analysts to generate adversarial scenarios and test model resilience without exposing customer records. Industry analyses cited by enterprise consultants attribute to Gartner a projection that synthetic data will make up roughly three-quarters of the data used in AI projects by 2026. As synthetic data becomes more pervasive and realistic, it can become indistinguishable from authentic sources, and if the underlying data is biased, the synthetic output may reinforce rather than reduce inequities.
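The bias-propagation risk can be shown with a deliberately naive generator. The Python sketch below fits each column's empirical value frequencies and samples new rows from them; this independent-marginals approach is an assumption chosen for brevity (production tools typically model joint distributions), and the column names are hypothetical. Whatever group imbalance exists in the source rows carries straight into the synthetic output.

```python
import random
from collections import Counter


def fit_marginals(rows: list[dict]) -> dict[str, Counter]:
    """Record the empirical frequency of each value, column by column."""
    return {col: Counter(row[col] for row in rows) for col in rows[0]}


def sample_synthetic(marginals: dict[str, Counter], n: int, seed: int = 0) -> list[dict]:
    """Draw n synthetic rows, sampling each column independently from its marginal."""
    rng = random.Random(seed)  # seeded for reproducible output
    # Pre-split each column into parallel value/weight lists for rng.choices.
    columns = {
        col: (list(counts), list(counts.values()))
        for col, counts in marginals.items()
    }
    return [
        {col: rng.choices(values, weights=weights, k=1)[0]
         for col, (values, weights) in columns.items()}
        for _ in range(n)
    ]


# Hypothetical source data with a 90/10 group imbalance.
real = ([{"group": "a", "outcome": "approved"}] * 90
        + [{"group": "b", "outcome": "denied"}] * 10)
synthetic = sample_synthetic(fit_marginals(real), n=5000, seed=42)
share_a = sum(row["group"] == "a" for row in synthetic) / len(synthetic)
print(f"share of group a in synthetic data: {share_a:.2f}")
```

Because each column is sampled independently, this generator also discards correlations between columns (here, between group and outcome), which is one reason it is only a teaching sketch.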

In multi-vendor AI ecosystems, supply chain governance has become a distinct challenge. Enterprises are advised to vet all third-party model providers, vector platforms, and LLM APIs, requiring documentation on training data, licensing, security practices, and risk mitigation, with termination clauses for non-compliance. The U.S. Office of Management and Budget memo M-26-04, issued in December 2025, requires federal agencies purchasing large language models to request model cards, evaluation artifacts, and acceptable use policies by March 2026.
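The documentation requirements above lend themselves to a simple gap check during procurement. In this sketch the artifact names are hypothetical labels that combine the enterprise vetting items (training data, licensing, security practices, risk mitigation) with the artifacts the paragraph attributes to OMB M-26-04 (model cards, evaluation artifacts, acceptable use policies); the memo does not define this checklist format.

```python
from dataclasses import dataclass, field

# Hypothetical checklist; labels are illustrative, not taken from any regulation.
REQUIRED_ARTIFACTS = frozenset({
    "training_data_documentation",
    "licensing_terms",
    "security_practices",
    "risk_mitigation_plan",
    "model_card",
    "evaluation_artifacts",
    "acceptable_use_policy",
})


@dataclass
class VendorSubmission:
    vendor: str
    artifacts: set[str] = field(default_factory=set)


def missing_artifacts(sub: VendorSubmission) -> list[str]:
    """Return required artifacts the vendor has not supplied, sorted for stable reports."""
    return sorted(REQUIRED_ARTIFACTS - sub.artifacts)


def vet(subs: list[VendorSubmission]) -> dict[str, list[str]]:
    """Map each vendor with gaps to its missing artifacts; complete vendors are omitted."""
    return {s.vendor: gaps for s in subs if (gaps := missing_artifacts(s))}


# Hypothetical submissions.
subs = [
    VendorSubmission("vendor_a", set(REQUIRED_ARTIFACTS)),
    VendorSubmission("vendor_b", {"model_card", "licensing_terms"}),
]
print(vet(subs))
# → {'vendor_b': ['acceptable_use_policy', 'evaluation_artifacts', 'risk_mitigation_plan', 'security_practices', 'training_data_documentation']}
```

A non-empty result for a vendor is the kind of signal that would trigger the termination clauses mentioned above, though the escalation policy itself is left to each organization.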

Cross-border data flows add further complexity: transfer restrictions can complicate collaboration on international AI projects. Synthetic data offers one route to sharing training-relevant datasets across jurisdictions with less regulatory friction, provided the synthetic records cannot be linked back to real individuals.

Outlook

In many organizations, legal reviews still occur after deployment, privacy assessments remain static checkboxes, and security sign-offs do not account for the dynamic nature of AI models. This reactive posture is no longer tenable under evolving regulatory mandates. As legal scrutiny of copyrighted training material intensifies, new compliance frameworks are likely to accelerate demand for transparent, auditable data supply chains, potentially altering vendor dynamics in favor of providers with verified, enterprise-grade data governance. With both the EU AI Act high-risk system deadline and Colorado's algorithmic discrimination requirements arriving in 2026, enterprise AI and legal teams face a narrowing window to formalize training data lifecycle controls before enforcement exposure materializes.