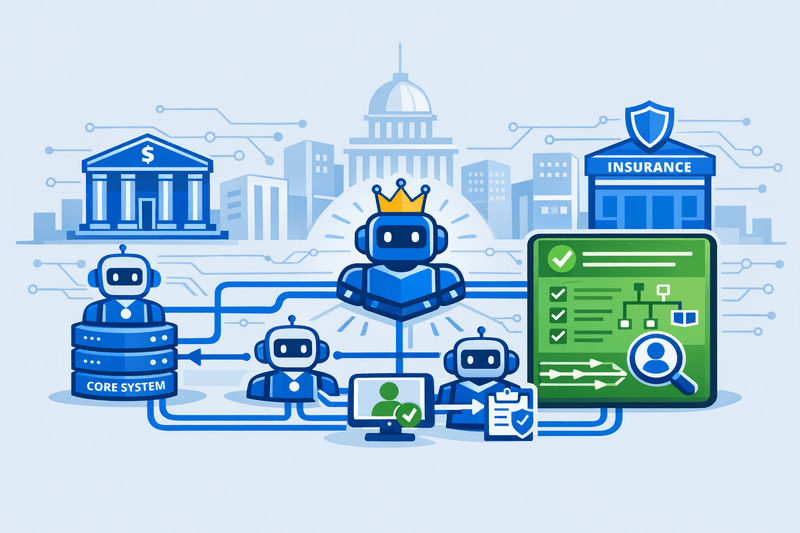

Executive summary. US federal and state regulators are expanding AI oversight in banking and insurance from individual models to end-to-end workflows executed by AI agents. As frameworks like NIST's AI Risk Management Framework (AI RMF) and the US Treasury's Financial Services AI Risk Management Framework (FS AI RMF) are adopted, regulatory expectations are converging around interoperable data schemas, standardized agent metadata, and auditable workflow controls. The regulatory direction is increasingly clear: AI workflow agents must function within governed, interoperable environments that maintain human oversight and enforce risk boundaries.

Regulators Shift from Model Risk to Workflow Agent Interoperability

Initially, US financial sector AI regulation addressed model risk and consumer protection, treating machine learning as an extension of traditional statistical models. The scope now includes autonomous and semi-autonomous workflow agents that coordinate decisions across systems and organizations.

On January 26, 2023, the National Institute of Standards and Technology (NIST) released AI RMF 1.0 as a voluntary, cross-sector Artificial Intelligence Risk Management Framework.1NIST AI Risk Management Framework (AI RMF 1.0) Launch | NIST The framework defines four functions (GOVERN, MAP, MEASURE, and MANAGE) that many financial institutions use to document AI risks.

In February 2026, the US Department of the Treasury published the Financial Services AI Risk Management Framework (FS AI RMF) and an AI Lexicon, adapting NIST's AI RMF for financial services.2Treasury Releases Two New Resources to Guide AI Use in the Financial Sector | U.S. Department of the Treasury Treasury's materials prioritize consistent terminology, lifecycle risk management, and integration with supervisory expectations, such as model risk management guidance (e.g., SR 11-7) and consumer-protection rules.3SR 11-7 Model Risk Management for AI — GLACIS

State-level insurance supervision is advancing quickly.

The National Association of Insurance Commissioners (NAIC) adopted its Artificial Intelligence Model Bulletin in December 2023, providing a uniform framework for evaluating insurers' use of AI and big data, including governance, data controls, and oversight of third-party AI systems.4March 2025 The bulletin requires insurers to inventory AI systems, document roles and responsibilities, and demonstrate data lineage and protection measures.

Colorado's Regulation 10-1-1, effective November 14, 2023, is the first binding state regulation requiring life insurers using external consumer data, algorithms, and predictive models to implement formal governance and risk management, including documented model inventories and ongoing monitoring.5Regulation 10-1-1 - GOVERNANCE AND RISK MANAGEMENT FRAMEWORK REQUIREMENTS FOR LIFE INSURERS' USE OF EXTERNAL CONSUMER DATA AND INFORMATION SOURCES, ALGORITHMS, AND PREDICTIVE MODELS | State Regulations | US Law | LII / Legal Information Institute This regulation promotes interoperability by requiring insurers to explain how algorithms interact with data sources and downstream processes.

Requirements for human oversight are also increasing.

Florida's House Bill 527 (Mandatory Human Reviews of Insurance Claim Denials), advancing through the legislature in early 2026, would prohibit property and health insurers from denying or reducing a claim based solely on AI, machine learning, or algorithmic outputs and require a qualified human to make the final decision with supporting documentation.6House Bill 527 (2026) Similar provisions have appeared in previous Senate analyses and related proposals.7Florida Bill Would Limit AI in Claims Denials, Extend Bar on Execs, Raise Surplus Levels

Regulatory signals indicate that state-level AI and data-protection statutes for lending, employment, and consumer rights will extend to AI-driven decisions in banking and insurance, tightening expectations around explainability, bias controls, and data governance for AI workflows.8The Evolving Landscape of AI Regulation in Financial Services | Insights & Resources | Goodwin

Key regulatory instruments shaping AI workflow agents

| Instrument / jurisdiction | Primary AI focus in financial services | Implications for workflow agents |
|---|---|---|
| NIST AI RMF 1.0 (US, cross-sector) | Voluntary, risk-based framework (GOVERN/MAP/MEASURE/MANAGE) for trustworthy AI across the lifecycle. | Encourages institutions to treat agentic workflows as AI "systems" requiring risk identification, measurement, and controls, beyond model tuning.1NIST AI Risk Management Framework (AI RMF 1.0) Launch |
| US Treasury FS AI RMF (2026) | Sector-specific adaptation of NIST AI RMF plus shared AI Lexicon for financial services. | Pushes banks and insurers toward consistent categorization of AI use cases, including agents, and governance alignment with prudential and consumer-protection rules.2Treasury Releases Two New Resources to Guide AI Use in the Financial Sector |
| NAIC AI Model Bulletin (2023) | Requirements for insurers' AI governance, including data management, third-party oversight, and documentation. | Mandates inventories and governance structures for AI agents in underwriting, pricing, and claims workflows.4March 2025 |
| Colorado Regulation 10-1-1 (2023) | Governance and risk management for life insurers using external consumer data and algorithms. | Requires detailed mapping of data sources, models, and decisions, which is key for interoperable, auditable agent workflows.5Regulation 10-1-1 - GOVERNANCE AND RISK MANAGEMENT FRAMEWORK REQUIREMENTS FOR LIFE INSURERS' USE OF EXTERNAL CONSUMER DATA AND INFORMATION SOURCES, ALGORITHMS, AND PREDICTIVE MODELS |
| Florida HB 527 (pending, 2026) | Human-in-the-loop requirements for AI-assisted claims handling. | Establishes human oversight and record-keeping for AI-influenced decisions, limiting how much agents adjudicate claims autonomously.6House Bill 527 (2026) |
| State AI / data-driven lending laws (various) | Bias, transparency, and data-use rules for AI in credit and employment. | Expands interoperability needs in workflows like credit underwriting and fraud detection, where agents use shared data and third-party scores.8The Evolving Landscape of AI Regulation in Financial Services |

Together, these measures drive banks and insurers toward standardized AI system descriptions, improved data governance, and verifiable human oversight, the foundations for rigorous interoperability standards for AI workflow agents.

Why Interoperability of AI Workflow Agents Has Become a Supervisory Priority

AI workflow agents operate across multiple systems, executing orchestrated tasks such as know-your-customer (KYC) checks, underwriting, fraud investigations, and claims triage.

Insurance case studies show that multi-step agentic workflows can reduce onboarding time and improve accuracy when integrated with CRM and legacy policy systems, while maintaining audit trails.9Automating KYC Triage with AI Workflow Agents | Yattir Labs These gains drive interest in agentic automation.

However, interoperability gaps have emerged as systemic risk areas:

- Fragmented APIs and data models. A 2026 review of financial APIs identified more than 80 frameworks in use across banks and fintechs in a single jurisdiction, lacking schema or authentication convergence.10The State of Financial APIs in 2026: 80+ Frameworks, Zero Convergence, and Why AI Agents Aren't Ready - DEV Community This complicates controls for agents operating across institutions.

- Opaque cross-vendor agent communications. Open standards such as Agent2Agent (A2A) are normalizing agent discovery and messaging across vendors and runtimes. By mid-2025, the Linux Foundation's A2A project reported backing from over 100 firms as an open protocol for secure agent-to-agent communication.11Agent2Agent Regulators are monitoring such protocol adoption.

- Limited governance maturity. A 2024 benchmarking survey of more than 200 financial sector compliance leaders found that only 32% had an AI governance committee, and just 12% applied a formal AI risk management framework, despite widespread AI tool deployment.12AI Transformation Roadmap for Financial Institutions: A Compliance-First Approach This governance lag becomes more acute as AI agents take over full workflows.

Regulators are less concerned with individual model intelligence and increasingly focused on cumulative risk from agents chaining actions (API calls, data enrichment, routing, and automated decisions) without clear, interoperable controls. Supervisors now routinely ask:

- Which agents handled specific data, in what sequence, and under which policies?

- Are agent permissions and capabilities specified in standardized, machine-readable, auditable formats?

- How is human oversight enforced when decisions cross organizational boundaries (e.g., bank-insurer partnerships, correspondent banking, reinsurance chains)?

These questions link interoperability standards with regulatory priorities: consistent data governance, explainability, and operational resilience.
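The first supervisory question above (which agents handled a record, in what order, under which policy) can be illustrated with a minimal sketch. The `AgentEvent` schema, the agent names, and the policy identifiers are hypothetical and are not drawn from any regulator's specification.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentEvent:
    """One step an agent performed on a data record (hypothetical schema)."""
    agent_id: str
    record_id: str
    action: str
    policy_id: str
    timestamp: datetime


def trace_record(events: list[AgentEvent], record_id: str) -> list[tuple[str, str, str]]:
    """Return (agent, action, policy) for one record, ordered by time."""
    relevant = sorted(
        (e for e in events if e.record_id == record_id),
        key=lambda e: e.timestamp,
    )
    return [(e.agent_id, e.action, e.policy_id) for e in relevant]


def _ts(minute: int) -> datetime:
    # Helper for the illustrative data below.
    return datetime(2026, 1, 5, 9, minute, tzinfo=timezone.utc)


# Illustrative events, deliberately stored out of order.
events = [
    AgentEvent("underwriting-agent", "app-001", "score_risk", "POL-UW-3", _ts(12)),
    AgentEvent("kyc-agent", "app-001", "verify_identity", "POL-KYC-1", _ts(5)),
    AgentEvent("kyc-agent", "app-002", "verify_identity", "POL-KYC-1", _ts(7)),
]

for step in trace_record(events, "app-001"):
    print(step)
```

In practice the events would come from a shared log store rather than an in-memory list; the point is that a common event schema makes the question answerable with a single query.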

Emerging Building Blocks of an Interoperability Standard

No unified or binding interoperability standard for AI workflow agents exists yet in US financial services, but several technical and governance elements are becoming common.

Standardized agent metadata and packaging

New open specifications are defining how to represent AI agents in portable, auditable formats:

- Agent manifests and identity. Initiatives like Manifest YAML specify agent identity, permissions, behavior, and trust attributes in machine-readable files, with public registry APIs for discovery.13Manifest YAML - Agent Identity Protocol

- Agent packaging standards. The Agent Packaging Standard (APS) outlines a vendor-neutral package format, including an `agent.yaml` manifest and registry API, supporting signing and provenance.14Agent Packaging Standard (APS) - Agent Packaging Standard (APS)

Regulatory evaluation of interoperability will likely prefer:

- Machine-readable metadata describing an agent's functions, inputs/outputs, autonomy, and controls.

- Registry-based discovery and attestation, ensuring only approved agents function in critical workflows.
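A minimal sketch of the two ideas above, machine-readable agent metadata and a registry that gates execution on approval, might look like the following. The field names and the `AgentRegistry` class are assumptions for illustration; they do not reproduce the Manifest YAML or APS schemas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentManifest:
    """Hypothetical machine-readable description of an agent."""
    agent_id: str
    version: str
    functions: tuple[str, ...]
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]
    autonomy: str  # e.g. "assistive", "decision-support", "decision-making"
    controls: tuple[str, ...] = ()


class AgentRegistry:
    """In-memory registry of approved (agent_id, version) pairs."""

    def __init__(self) -> None:
        self._approved: dict[tuple[str, str], AgentManifest] = {}

    def approve(self, manifest: AgentManifest) -> None:
        self._approved[(manifest.agent_id, manifest.version)] = manifest

    def is_approved(self, agent_id: str, version: str) -> bool:
        return (agent_id, version) in self._approved

    def require_approved(self, agent_id: str, version: str) -> AgentManifest:
        """Return the manifest, or refuse if this exact version is not approved."""
        try:
            return self._approved[(agent_id, version)]
        except KeyError:
            raise PermissionError(f"{agent_id}@{version} is not an approved agent") from None


registry = AgentRegistry()
registry.approve(AgentManifest(
    agent_id="claims-triage",
    version="1.2.0",
    functions=("classify_claim", "route_claim"),
    inputs=("claim_form",),
    outputs=("triage_label",),
    autonomy="decision-support",
    controls=("logging", "human_review_on_denial"),
))
```

Keying approval on the exact version means an updated agent has to be re-approved before it can run in a critical workflow, which is one way to read the attestation requirement.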

Data provenance, lineage, and evidentiary controls

Research and policy efforts are bringing data governance into AI assurance:

- AI risk-assurance models propose bills of materials and machine-readable records of data sources, security testing, and controls.15AI Bill of Materials and Beyond: Systematizing Security Assurance through the AI Risk Scanning (AIRS) Framework

- "Policy Card" models encode allow/deny rules, obligations, and links to frameworks like NIST AI RMF and ISO/IEC 42001.16Policy Cards: Machine-Readable Runtime Governance for Autonomous AI Agents
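To make the allow/deny idea concrete, here is a small sketch of a runtime check against a policy record. The `PolicyCard` structure and the action names are hypothetical and do not follow the published Policy Card format; the sketch assumes deny rules take precedence and that unlisted actions are denied by default.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyCard:
    """Hypothetical runtime policy record for one agent."""
    agent_id: str
    allow: frozenset[str]
    deny: frozenset[str]
    # Obligations that attach to an allowed action, keyed by action name.
    obligations: dict[str, tuple[str, ...]] = field(default_factory=dict)
    # Free-text labels for the frameworks this card is mapped to.
    framework_refs: tuple[str, ...] = ()


def evaluate(card: PolicyCard, action: str) -> tuple[bool, tuple[str, ...]]:
    """Return (permitted, obligations). Deny wins; unknown actions are denied."""
    if action in card.deny:
        return (False, ())
    if action in card.allow:
        return (True, card.obligations.get(action, ()))
    return (False, ())


card = PolicyCard(
    agent_id="claims-triage",
    allow=frozenset({"read_claim", "recommend_denial"}),
    deny=frozenset({"issue_denial"}),
    obligations={"recommend_denial": ("log_rationale", "route_to_human")},
    framework_refs=("NIST AI RMF", "ISO/IEC 42001"),
)
```

Default-deny is a design choice of this sketch: an agent that gains a new capability cannot use it until the card is updated.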

NAIC guidance and state regulation already require documentation of data lineage, quality controls, and ongoing model monitoring for third-party AI systems.4March 2025 Applying these requirements to agents means:

- Traceable end-to-end lineage from source data through agentic transformations to outcomes.

- Tamper-evident logs and artifacts that support audits even for non-deterministic models.
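One common way to make a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below uses Python's standard `hashlib` and `json`; the entry layout and payload fields are assumptions, and the source does not prescribe this technique.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], payload: dict) -> dict:
    """Append a payload, linking it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"payload": payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"agent": "kyc-agent", "action": "verify_identity", "record": "app-001"})
append_entry(log, {"agent": "underwriting-agent", "action": "score_risk", "record": "app-001"})
print(verify_chain(log))
```

A chain like this only detects tampering if the latest hash is also stored somewhere the log's writer cannot alter, for example with a separate audit function. Because it records what the agent actually did, it supports audits even when the underlying model would not reproduce the same output on a rerun.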

Workflow mapping and risk-appetite alignment

Regulators emphasize that institutions must identify where AI sits in business processes and how that fits risk appetite:

- Treasury's FS AI RMF recommends mapping AI use cases to business and regulatory requirements, then rating criticality and risk.2Treasury Releases Two New Resources to Guide AI Use in the Financial Sector | U.S. Department of the Treasury

- Colorado Regulation 10-1-1 mandates inventories documenting algorithmic impact on consumer outcomes.5Regulation 10-1-1 - GOVERNANCE AND RISK MANAGEMENT FRAMEWORK REQUIREMENTS FOR LIFE INSURERS' USE OF EXTERNAL CONSUMER DATA AND INFORMATION SOURCES, ALGORITHMS, AND PREDICTIVE MODELS | State Regulations | US Law | LII / Legal Information Institute

For workflow agents, this requires:

- Tiered agent risk classifications (informational, decision-support, decision-making) with corresponding controls.

- Confirmation that autonomy, escalation thresholds, and fallbacks are set to risk appetite, particularly in underwriting, fraud, and claims.
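The tiering idea can be sketched as a lookup from risk tier to required controls, plus a gap check. The three tier names come from the text above; the control names are hypothetical, and an institution would set them according to its own risk appetite.

```python
from enum import Enum


class Tier(Enum):
    INFORMATIONAL = 1
    DECISION_SUPPORT = 2
    DECISION_MAKING = 3


# Hypothetical control sets; each higher tier adds to the one below it.
REQUIRED_CONTROLS: dict[Tier, set[str]] = {
    Tier.INFORMATIONAL: {"logging"},
    Tier.DECISION_SUPPORT: {"logging", "output_review_sampling"},
    Tier.DECISION_MAKING: {
        "logging",
        "output_review_sampling",
        "human_approval",
        "fallback_procedure",
    },
}


def missing_controls(tier: Tier, implemented: set[str]) -> set[str]:
    """Return the controls the tier requires that the agent does not yet have."""
    return REQUIRED_CONTROLS[tier] - implemented


gaps = missing_controls(Tier.DECISION_MAKING, {"logging", "human_approval"})
print(sorted(gaps))
```

Running the same check across an agent inventory gives a simple remediation list ordered by tier.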

Toward interoperable registries and supervisory tooling

Globally, some financial-inclusion bodies are piloting registry systems that use centralized records and analytics pipelines for RegTech supervision of distributed agent networks.17FINANCIAL INNOVATIONS The architecture parallels emerging AI agent systems.

Private-sector efforts are developing decentralized registries for autonomous agents, featuring on-chain discovery, usage tracking, and trust scoring.18x402 API Registry - Decentralized API Marketplace Regulators will likely consider interfaces between supervisory technology and such registries to enable real-time agent oversight.

Operational and Architectural Impacts for Banks and Insurers

Interoperability expectations are shaping technology strategies in banking and insurance.

Architecture, integration, and vendor strategy

Institutions with fragmented AI adoption face:

- Heterogeneous interfaces. Vendors provide different APIs, events, and logging formats, hindering unified oversight of agents across banking, CRM, and policy systems.

- Vendor lock-in vs. open protocols. Initiatives like A2A, Model Context Protocol (MCP), and open manifest/package standards offer alternatives to proprietary orchestration but require thorough security and compliance review.11Agent2Agent

- Legacy systems. Core platforms often lack machine-readable agent metadata or provenance, necessitating middleware for log normalization and enrichment.
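As an illustration of the log-normalization middleware mentioned above, the sketch below maps two vendor log formats onto one common record. Both vendor formats, and every field name, are invented for the example.

```python
from datetime import datetime, timezone


def normalize_vendor_a(raw: dict) -> dict:
    """Hypothetical vendor A: {"agent", "event", "ts"} with ts in epoch seconds."""
    ts = datetime.fromtimestamp(raw["ts"], tz=timezone.utc)
    return {
        "agent_id": raw["agent"],
        "action": raw["event"],
        "timestamp": ts.isoformat(),
        "source": "vendor_a",
    }


def normalize_vendor_b(raw: dict) -> dict:
    """Hypothetical vendor B: {"agentName", "op", "time"} with an ISO 8601 offset time."""
    ts = datetime.fromisoformat(raw["time"]).astimezone(timezone.utc)
    return {
        "agent_id": raw["agentName"],
        "action": raw["op"],
        "timestamp": ts.isoformat(),
        "source": "vendor_b",
    }


NORMALIZERS = {"vendor_a": normalize_vendor_a, "vendor_b": normalize_vendor_b}


def normalize(source: str, raw: dict) -> dict:
    """Dispatch to the right normalizer; unknown sources fail loudly."""
    if source not in NORMALIZERS:
        raise ValueError(f"no normalizer registered for {source!r}")
    return NORMALIZERS[source](raw)


record = normalize(
    "vendor_b",
    {"agentName": "kyc-agent", "op": "verify_identity", "time": "2026-01-05T10:05:00+01:00"},
)
print(record)
```

Converting every timestamp to UTC at the edge keeps cross-vendor event ordering unambiguous when logs are later merged for review.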

Banks and insurers modernizing their AI stacks should prioritize:

- Contracts requiring standards-based agent metadata and registry access.

- Assessment of vendor support for open interoperability standards versus reliance on proprietary frameworks.

Governance, controls, and assurance

As workflow agents expand in scope, risk and compliance reviews must progress beyond individual models:

- System-level assurance. Regulators may require evidence that full workflows (including policies, routing, approvals, and message flows) operate within controlled parameters.

- Unified data governance. Data quality, lineage, and access must be consistent across internal and partner systems, supporting data-governance mandates.

- Human oversight policies. Measures like Florida's claims-handling standards illustrate moves to establish minimum human-in-the-loop requirements for decisions affecting consumers.6House Bill 527 (2026)

Organizations demonstrating standardized, interoperable agent controls can expect more efficient supervisory reviews and lower remediation costs.

Actionable Steps for CIOs, CTOs, and Risk Leaders

With regulatory expectations evolving, several steps are recommended for institutions deploying AI workflow agents.

1. Establish an agent-centric inventory and classification

- Create a central inventory of all AI workflow agents and automation components, both internal and third-party.

- Classify agents by:

  - Business process (e.g., credit underwriting, claims, KYC, fraud).

  - Criticality and consumer impact.

  - Autonomy (assistive, decision-support, decision-making).

- Link each agent to relevant regulatory frameworks (NIST AI RMF, FS AI RMF, NAIC, and state laws).

2. Map end-to-end workflows and control points

- Document complete workflows involving agents, covering upstream data and downstream processes.

- Identify critical controls, including:

  - Data quality and lineage requirements.

  - Human approvals for high-impact actions.

  - Obligations from data sharing and open APIs.
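A workflow map with control points can be kept as structured data and checked automatically. The sketch below flags high-impact steps that lack a human-approval control; the `Step` structure, step names, and control names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One step in a mapped workflow (hypothetical structure)."""
    name: str
    actor: str  # an agent identifier, or "human"
    high_impact: bool = False
    controls: set[str] = field(default_factory=set)


def steps_missing_human_approval(workflow: list[Step]) -> list[str]:
    """Names of high-impact steps with no 'human_approval' control attached."""
    return [
        step.name
        for step in workflow
        if step.high_impact and "human_approval" not in step.controls
    ]


# Illustrative claims workflow.
workflow = [
    Step("collect_documents", "intake-agent", controls={"data_quality_check"}),
    Step("score_claim", "claims-agent", controls={"lineage_capture"}),
    Step("deny_claim", "claims-agent", high_impact=True),
    Step("approve_payout", "claims-agent", high_impact=True, controls={"human_approval"}),
]

print(steps_missing_human_approval(workflow))
```

The same pattern extends to the other control types listed above, for example checking that every step reading external data carries a lineage control.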

3. Align AI governance with NIST and Treasury frameworks

- Use NIST AI RMF and FS AI RMF to benchmark governance across GOVERN, MAP, MEASURE, and MANAGE functions.

- Integrate AI workflow governance with model risk, operational risk, and data governance efforts.

- Target governance gaps affecting high-risk agents first.

4. Adopt or pilot standardized agent metadata and packaging

- Require all agents to use machine-readable manifests matching emerging standards.

- Evaluate open packaging specifications (e.g., APS) to reduce integration friction and support registry plans.14Agent Packaging Standard (APS) - Agent Packaging Standard (APS)

- Ensure logging and monitoring allow audit teams to reconstruct agent behavior from standardized records.

5. Prepare for registry and interoperability requirements

- Build internal agent registries cataloging approved agents, risk classifications, and permissions.

- Enable metadata and evidence exposure through secure APIs, with future external registry connectivity in mind.

6. Strengthen cross-functional oversight

- Formalize AI governance committees including technology, risk, compliance, legal, and business, addressing current gaps in structures.12AI Transformation Roadmap for Financial Institutions: A Compliance-First Approach

- Define decision rights for agent approval, changes in autonomy, and integration with critical systems.

Proactive steps can minimize future compliance costs and strengthen automated workflow resilience and transparency.

Frequently Asked Questions

How do interoperability standards for AI workflow agents differ from traditional model risk guidance?

Traditional model risk guidance (e.g., SR 11-7) emphasizes development, validation, and use of individual models.3SR 11-7 Model Risk Management for AI — GLACIS Interoperability standards expand this to the full chain of actions and systems where agents operate, including:

- Agent API interactions across internal and external systems.

- Alignment of data schemas and identifiers across organizations.

- Consistent enforcement of permissions, oversight, and audit trails, regardless of vendor.

Model risk management remains critical but must be complemented by cross-system behavior and control standards.

Which regulatory frameworks should banks and insurers prioritize when designing AI agent architectures?

Key frameworks include:

- NIST AI RMF 1.0 for baseline AI risk management.

- Treasury's FS AI RMF and AI Lexicon for sector-specific guidance aligned with NIST and supervisory standards.

- NAIC AI Model Bulletin and state rules such as Colorado Regulation 10-1-1 for insurers, and Florida's HB 527 for claims handling.2Treasury Releases Two New Resources to Guide AI Use in the Financial Sector | U.S. Department of the Treasury

Architecture choices for AI workflow agents should demonstrate alignment with these frameworks, focusing on governance, data controls, human oversight, and documentation.

How will tighter interoperability expectations affect vendor lock-in for AI platforms?

Interoperability standards can lower vendor lock-in by promoting standardized agent descriptions and communication protocols, simplifying platform migration or coexistence. Initiatives like A2A, MCP, and agent-packaging standards reflect this direction.11Agent2Agent

However, lock-in risks remain if standards are implemented in vendor-specific ways or through proprietary extensions. Procurement and architecture teams should:

- Assess adherence to open specifications.

- Avoid proprietary features limiting agent portability.

- Require contractual guarantees for interoperability and data portability.

What role will human oversight play in future standards for AI workflow agents?

Legislation and guidance point toward mandatory human oversight for high-stakes decisions. Florida's HB 527, for example, would ban claim denials based solely on AI output and mandate qualified human review and documentation.6House Bill 527 (2026)

Future standards are likely to codify:

- Machine-readable markers for human sign-off within workflows.

- Escalation and fallback logic when ambiguity arises.

- Requirements to document that human reviews occurred.
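A machine-readable sign-off marker with escalation can be sketched as follows. This is an illustration of the pattern only, not an implementation of HB 527 or any other legal requirement; the class names, the "adverse" outcome set, and the return values are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class HumanSignOff:
    """Hypothetical marker recording that a person reviewed the decision."""
    reviewer_id: str
    rationale: str


@dataclass(frozen=True)
class ClaimDecision:
    claim_id: str
    agent_recommendation: str  # "approve", "deny", or "reduce"
    sign_off: Optional[HumanSignOff] = None


# Outcomes assumed to need human review in this sketch.
ADVERSE = {"deny", "reduce"}


def finalize(decision: ClaimDecision) -> str:
    """Finalize only if an adverse outcome carries a documented human sign-off."""
    if decision.agent_recommendation not in ADVERSE:
        return "finalized"
    if decision.sign_off is None or not decision.sign_off.rationale.strip():
        return "escalate_to_human"
    return "finalized"


pending = ClaimDecision("CLM-100", "deny")
reviewed = ClaimDecision("CLM-100", "deny", HumanSignOff("adjuster-17", "Policy lapsed before loss date."))
print(finalize(pending), finalize(reviewed))
```

Treating an empty rationale the same as a missing sign-off reflects the documentation point above: the record should show that a review happened and why it reached its conclusion.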

How should institutions balance agility in AI deployment with growing interoperability and governance requirements?

Early adopters demonstrate that agility and governance are compatible when AI workflow agents are built using modular, standards-aligned architectures. Scalability and regulatory adaptability improve with investments in:

- Centralized agent registries and manifests.

- Standardized logging and data provenance.

- Cross-functional governance committees.

Reliance on bespoke, non-interoperable agents increases long-term compliance and integration costs as standards evolve.