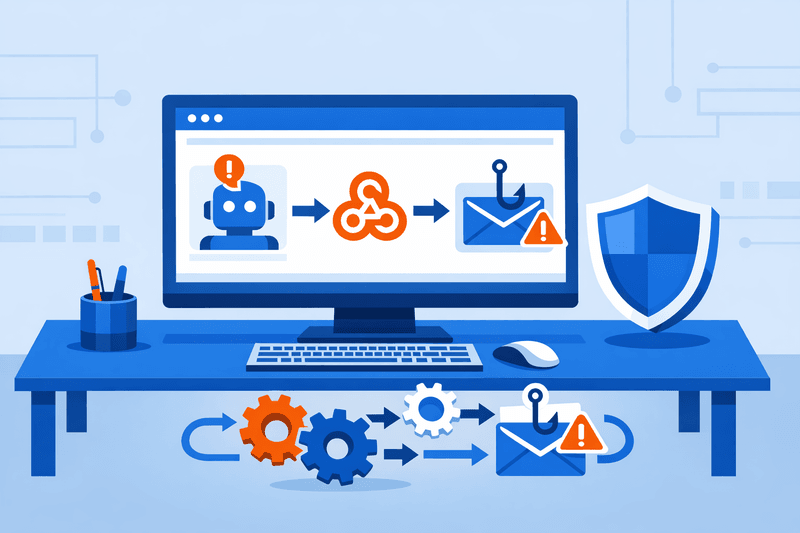

Cisco Talos has documented active abuse of n8n, a widely adopted AI workflow automation platform, to deliver malware and conduct device fingerprinting campaigns. The finding signals a broader shift in how threat actors exploit enterprise-grade automation tooling.

Talos researchers found agentic AI workflow automation platforms being abused in email campaigns, and observed a rise in malicious emails abusing n8n from as early as October 2025 through March 2026. The volume of emails containing n8n webhook URLs in March 2026 was approximately 686% higher than in January 2025, or nearly eight times the January figure. As with other legitimate tools, AI workflow automation platforms can be weaponized to orchestrate malicious activities, including delivering malware via automated emails.

Background

The cybersecurity landscape is undergoing a significant shift. Between January and February 2026, a major evolution emerged in how threat actors adopt, weaponize, and operationalize AI. The tooling that enterprises embraced for productivity has simultaneously become a rapidly expanding attack surface.

Gartner projects that 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026. The productivity and competitive gains from agentic AI are real, and enterprises moved quickly to capture them, often before the identity, security, and governance frameworks needed to manage autonomous systems were in place. The technology outran the controls.

As AI adoption surged from 2023 to 2025, teams across the enterprise quietly deployed private or third-party LLMs outside official oversight. By 2026, these shadow models represent a significant and largely invisible attack surface, introducing unmonitored data flows, unknown training retention, and inconsistent access controls.

Details

The n8n abuse campaign illustrates a key technical mechanism now available to attackers. Because webhooks mask the source of the data they deliver, they can serve payloads from untrusted sources while appearing to originate from a trusted domain. Since webhooks can dynamically serve different data streams based on triggering events, a phishing operator can tailor payloads based on user-agent header information.
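On the defensive side, one way to act on this is to flag emails whose links point at n8n-hosted webhook endpoints. The sketch below is illustrative only. It assumes that n8n cloud webhooks follow the shape `https://<tenant>.app.n8n.cloud/webhook/<path>` (or `/webhook-test/<path>`), and the function name `find_n8n_webhook_urls` and the `acme` tenant in the usage line are hypothetical. Self-hosted n8n instances run on arbitrary domains and would not be caught by this check.

```python
import re
from urllib.parse import urlparse

# Assumption: n8n-hosted webhooks look like
#   https://<tenant>.app.n8n.cloud/webhook/<path>  (or /webhook-test/<path>).
# Self-hosted n8n instances sit on arbitrary domains and are not covered here.
N8N_HOST_SUFFIX = ".app.n8n.cloud"
WEBHOOK_PREFIXES = ("/webhook/", "/webhook-test/")

# Loose URL matcher: stops at whitespace, quotes, and angle brackets.
URL_RE = re.compile(r"https?://[^\s\"'<>]+", re.IGNORECASE)


def find_n8n_webhook_urls(body: str) -> list[str]:
    """Return URLs in an email body that look like n8n cloud webhook endpoints."""
    hits = []
    for match in URL_RE.finditer(body):
        # Drop sentence punctuation that the loose regex may have swallowed.
        url = match.group(0).rstrip(".,;:!?)")
        try:
            parsed = urlparse(url)
            host = (parsed.hostname or "").lower()
        except ValueError:
            # Malformed URL (for example, unbalanced IPv6 brackets): skip it.
            continue
        if host.endswith(N8N_HOST_SUFFIX) and parsed.path.startswith(WEBHOOK_PREFIXES):
            hits.append(url)
    return hits


# Hypothetical usage: "acme" is a made-up tenant name.
sample = "Your document is ready: https://acme.app.n8n.cloud/webhook/abc123."
print(find_n8n_webhook_urls(sample))
```

A match is a signal for further review rather than proof of malice, since legitimate n8n workflows use the same URL shape.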

Rather than relying on reusable templates flagged by email filters, attackers generate messages tailored to each target and role. Tools such as EvilTokens support AI workflows that help bypass email filtering, tailor phishing lures, and identify sensitive emails for wire fraud or data exfiltration.

The scale of the underlying threat is well-documented. Cofense research identified one malicious email on average every 19 seconds during 2025. Acronis' Cyberthreats Report for H2 2025 found email-based attacks rose 16% per organization and 20% per user year on year, with phishing accounting for 83% of all email threats and 52% of attacks targeting managed service providers. Acronis CISO Gerald Beuchelt said that "attackers are increasingly integrating AI into their operations," adding that they "are not only scaling traditional methods like phishing and ransomware, but are leveraging AI to act faster, more efficiently, and at greater scale."

According to the 2026 Agentic AI Security Report by Arkose Labs, which is based on a global survey of 300 enterprise leaders, 97% of respondents expect a material AI-agent-driven security or fraud incident within the next 12 months, with nearly half expecting one within six months. Yet only 6% of security budgets are currently allocated to this risk.

Incident response frameworks are struggling to keep pace. As AI agents participate in multi-step workflows across systems and services, incident investigations grow significantly more complex. The ability to reconstruct what an autonomous system did (across which APIs, through which credentials, to which outcomes) will determine whether an organization can respond effectively and demonstrate accountability to regulators.
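To make the reconstruction requirement concrete, here is a minimal sketch of an audit record that captures the three dimensions named above (API, credential, outcome) along with a timestamp and agent identity. The `AgentAction` type, its field names, and both helper functions are assumptions made for illustration and do not come from any particular product or framework.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAction:
    """One audited step taken by an AI agent (hypothetical schema)."""
    timestamp: datetime
    agent_id: str       # which agent identity acted
    api: str            # which API or service was called
    credential_id: str  # which credential or token was used
    outcome: str        # for example "success", "denied", "error"


def reconstruct_timeline(log: list[AgentAction], agent_id: str) -> list[AgentAction]:
    """Return one agent's actions in chronological order."""
    return sorted((a for a in log if a.agent_id == agent_id), key=lambda a: a.timestamp)


def credentials_used(timeline: list[AgentAction]) -> set[str]:
    """Return the distinct credentials that appear in a timeline."""
    return {a.credential_id for a in timeline}
```

Records like these only help if they are written at the moment of each action and stored where the agent itself cannot alter them.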

Data governance is an equally pressing concern. Identity and access management risks will expand dramatically as agents require broad, cross-environment permissions; compromised credentials, SSO platforms, or agent identities could enable large-scale service disruption or data exfiltration. The EchoLeak vulnerability in Microsoft 365 Copilot demonstrated that a zero-click prompt injection could access and silently exfiltrate enterprise data. When AI agents operate independently with full system access, prompt injection is no longer a chatbot trick; it becomes a tangible attack vector.

Outlook

Security analysts anticipate that identity security will shift toward "agent identity governance," requiring lifecycle management, least-privilege enforcement, and behavioral monitoring for AI agents comparable to those applied to human users. Prompt injection is expected to evolve into a mainstream enterprise attack technique, with threat actors increasingly prioritizing the manipulation of AI agents over deploying traditional malware.
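As a rough illustration of what least-privilege enforcement for agent identities could look like, the sketch below checks each requested scope against an explicit grant table and denies anything that is not listed. The agent name, the scope strings, and the `is_allowed` helper are all hypothetical; a real deployment would back this with its identity provider rather than an in-memory dictionary.

```python
# Hypothetical grant table: each agent identity maps to the only scopes it may use.
AGENT_GRANTS: dict[str, frozenset[str]] = {
    "invoice-agent": frozenset({"erp:read", "email:send"}),
}


def is_allowed(
    agent_id: str,
    scope: str,
    grants: dict[str, frozenset[str]] = AGENT_GRANTS,
) -> bool:
    """Deny by default: unknown agents and ungranted scopes are both refused."""
    return scope in grants.get(agent_id, frozenset())
```

The deny-by-default shape matters more than the storage: an agent that is missing from the table gets no access at all, rather than inheriting a broad default.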

Organizations navigating this era effectively are not waiting for formal regulatory frameworks. They are building visibility, attribution, and classification capabilities now, because in the era of agentic AI, resilience depends on operational readiness, not policy intent.